TL;DR: Cookie scanners often inventory cookies instead of answering the real compliance question: what loads before consent (including beacons, tag bootstraps, and other tracking tech beyond cookies). I tested 10 EU-focused scanner ecosystems on the same site, measuring only the pre-consent window (no clicks, no reject/accept flow). The outputs disagreed wildly - from “44 trackers” to “0 findings,” and one scanner didn’t even finish within an hour.

Two weeks ago I published a study of Danish E-Commerce Awards nominees. Most sites still showed evidence of tracking before consent.

After it went live, one of the nominated companies reached out. They were appreciative - and then added a line I hear constantly:

Their cookie scanner didn’t reveal any problems.

Their supplier had told them it was compliant.

They thought they were compliant.

That’s the moment this Weekly is built around.

Because if your scanner says “all good” while your site still makes tracking calls before anyone has chosen anything, you don’t have compliance - you have false confidence. And false confidence is what keeps leakage “normal” for years.

“A clean scanner report can be the most dangerous outcome - it convinces the business to stop looking.”

What scanners usually measure vs what compliance actually is

Most scanners are designed to answer the question teams want answered:

“What cookies exist, and can we classify them into a declaration?”

That’s useful documentation. It is not the compliance boundary.

The compliance boundary is timing at the device access layer: whether tracking identifiers are set or tracking calls are made before a visitor has had a real choice.

And the modern problem is that tracking isn’t “just cookies” anymore. A site can initialize the marketing stack, send event data, and only set identifiers later - producing a scanner report that looks calm while the browser has already “phoned home.”

“Banners don’t create compliance - architecture does.”

What the data showed

In the nominee dataset, pre-consent leakage wasn’t exotic - it was standard.

The most common pre-consent beacon host was Google Tag Manager, and the surrounding ecosystem followed the same gravity: email tooling, platform telemetry, ad pixels, analytics stacks.

Here’s the part that matters for teams reading this: if your scanner is not explicitly measuring pre-consent network activity, it can miss exactly the most common failure mode.

Most common pre-consent beacon hosts (share of unique domains)

- Google Tag Manager (GTM) - 92/137 (67.15%)

- Klaviyo - 40/137 (29.20%)

- Shopify telemetry - 38/137 (27.74%)

- Meta - 37/137 (27.01%)

- Google Analytics - 36/137 (26.28%)

Most common tracking cookies observed pre-consent

- DoubleClick test_cookie - 13.14%

- Meta _fbp - 12.41%

- DoubleClick IDE - 8.03%

- LinkedIn bcookie - 8.03%

- LinkedIn li_gc - 8.03%

- LinkedIn lidc - 8.03%

These are not “edge trackers.” This is mainstream MarTech, firing before the visitor has done anything.

“If GTM loads before choice exists, the rest of the stack is already out of your control.”

Why scanners miss pre-consent beacons

This isn’t about accusing vendors of intent. It’s about acknowledging methodology limits in a modern web.

If you remember only one thing from the dataset, remember this: the pre-consent stack isn’t ‘mystery trackers’ - it’s the most standard tools in e-commerce.

Most scanners struggle with one or more of these realities:

- They’re cookie-inventory heavy, but tracking can be “cookieless until later.”

- They don’t consistently isolate/report the pre-consent window.

- They under-execute dynamic behaviors: async injections, timers, soft navigations, embedded widgets.

- They don’t model how marketing teams actually ship: new scripts added outside the gate, app updates, tag changes.
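To make the first two points concrete, here is a minimal sketch of how a cookie-only view and a network view can disagree about the same pre-consent page load. The `PreConsentObservation` shape, the function names, and the example URLs are all assumptions for illustration; they are not taken from any scanner. First-party matching is a simple suffix check on a domain you supply, not a full registrable-domain lookup.

```python
from dataclasses import dataclass, field
from urllib.parse import urlsplit


@dataclass
class PreConsentObservation:
    """What one page load recorded before any banner interaction (hypothetical shape)."""
    site_domain: str                                        # e.g. "example.com"
    cookie_names: list[str] = field(default_factory=list)   # cookies set so far
    request_urls: list[str] = field(default_factory=list)   # every request URL seen


def cookie_inventory(obs: PreConsentObservation) -> list[str]:
    """The cookie-only view: just the names of cookies that were set."""
    return sorted(set(obs.cookie_names))


def third_party_hosts(obs: PreConsentObservation) -> list[str]:
    """The network view: hosts contacted that are not the site or its subdomains."""
    hosts = set()
    for url in obs.request_urls:
        host = urlsplit(url).hostname
        if not host:
            continue  # skip data: URLs and anything without a hostname
        if host == obs.site_domain or host.endswith("." + obs.site_domain):
            continue  # treated as first-party under the suffix assumption
        hosts.add(host)
    return sorted(hosts)


# Made-up example: no cookies yet, but two third-party calls already happened.
obs = PreConsentObservation(
    site_domain="example.com",
    cookie_names=[],
    request_urls=[
        "https://example.com/assets/app.js",
        "https://www.googletagmanager.com/gtm.js?id=GTM-XXXX",
        "https://tracker.example.net/collect?ev=pageview",
    ],
)

print(cookie_inventory(obs))   # the cookie-only report is empty
print(third_party_hosts(obs))  # the network view still lists two hosts
```

A tool that only runs something like `cookie_inventory` would report nothing for this load, even though the browser has already contacted two external hosts.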

And there’s a point that feels taboo but is necessary to say:

Sometimes the consent layer itself becomes part of the leak.

In the Awards write-up I called out the pattern of pre-consent third-party calls to CMP-provider infrastructure - the “gate” talking externally before the visitor has chosen.

“Nothing found” doesn’t mean “nothing happened.” It can mean “we didn’t measure the pre-consent window.”

The test that settles the argument

You don’t need a debate about definitions. You need evidence.

Open a clean session and test three states:

- Pre-consent (do nothing)

- Reject all

- Accept all

Then compare:

- network requests (beacons / endpoints)

- tag bootstrap scripts (especially tag managers)

- identifiers (cookies + localStorage)
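The comparison itself can be sketched as plain set arithmetic. This is a minimal illustration, assuming you have already captured the third-party hosts (or identifiers) seen in each of the three states, for example from a HAR export. The function name, the bucket names, and the sample hosts are hypothetical. It does not decide what is strictly necessary; it only shows when each item first appears.

```python
def compare_consent_states(pre, reject, accept):
    """Bucket observed items by the earliest state in which they appear.

    Each argument is an iterable of hosts or identifier names captured in a
    clean session: `pre` with no interaction, `reject` after "Reject all",
    `accept` after "Accept all".
    """
    pre, reject, accept = set(pre), set(reject), set(accept)
    return {
        # Anything here happened before the visitor chose anything.
        "before_choice": sorted(pre),
        # Items that only show up once the visitor has rejected.
        "new_after_reject": sorted(reject - pre),
        # Items held back until the visitor accepted: the gate worked for these.
        "gated_until_accept": sorted(accept - pre - reject),
    }


# Made-up capture for illustration only.
result = compare_consent_states(
    pre={"www.googletagmanager.com", "cdn.example-cmp.test"},
    reject={"www.googletagmanager.com", "cdn.example-cmp.test"},
    accept={
        "www.googletagmanager.com",
        "cdn.example-cmp.test",
        "connect.facebook.net",
    },
)

for bucket, items in result.items():
    print(bucket, items)
```

In this made-up capture, only one host is actually gated until accept; everything else is already present before any choice, which is the pattern worth investigating first.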

If you want a quick first step before doing deeper forensics, run the domain through our free Privacy Scanner. It’s built around the same baseline principle: what evidence appears before a valid consent signal (client-side beacons + cookies), not just what can be documented later.

If it flags pre-consent evidence, the work is rarely “rewrite the banner.” The work is “move execution order so the marketing stack can’t initialize early.”

That’s the manual ground-truth method. For the scanner benchmark below, I only compared what each tool reports in the pre-consent window.

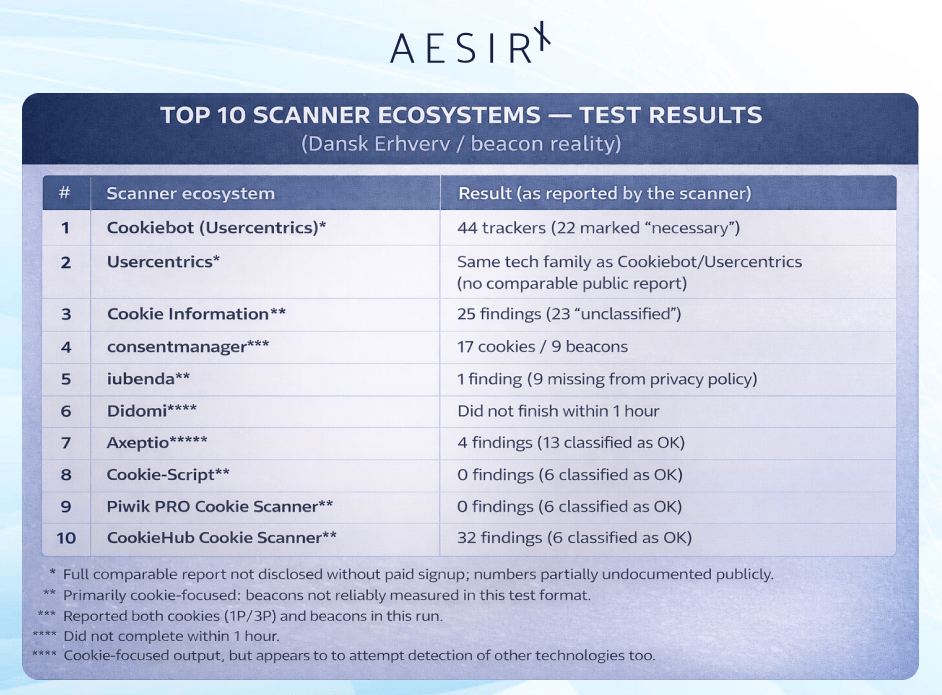

The 10 scanner ecosystems I tested

I tested ten of the most commonly used EU / EU-focused scanning ecosystems against “beacon reality” - using the same baseline every time:

What happens on the site before consent (the pre-consent window).

So I stopped arguing in theory and ran the scanners against a site that contains both cookies and beacons - because that’s where scanners either tell the truth or expose their limits.

Here are the results as each tool presented them (note: the labels and methodology vary a lot, so this is best read as “what problems the scanner surfaced,” not as a standardized measurement):

The test site and the baseline reality

All tests were run against the same site: Dansk Erhverv (the organization behind the Danish E-Commerce Awards), using https://www.danskerhverv.dk/.

In our reference privacy scan, we observe 1 third-party cookie and 9 beacons on the site. That makes it a useful “reality check” target: it contains both cookie activity and beacon activity, so you quickly see whether a scanner actually detects tracking technologies beyond cookies.

On this site:

- Before consent, the website makes requests to 18 distinct third-party hosts.

- Potential third-party web beacons are sent to 5 distinct hosts.

- We observe 7 first-party cookies and 1 third-party cookie.

- The site has 92 distinct third-party hosts whitelisted (allowlisted).

- Server-side Google Tag Manager is loaded before consent via load.sgtm.danskerhverv.dk. This can evade scanners that rely on cookie-only logic or third-party heuristics, because the third-party destination is hidden behind a first-party endpoint.
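One way to catch the last pattern is to stop treating first-party requests as automatically safe. The sketch below is a rough heuristic, not a description of how any scanner works: it flags first-party URLs that look like a tag-manager loader, based on the assumption that the loader keeps the standard `gtm.js` file name or a `GTM-` container ID in its `id` query parameter. The function name and example URLs are hypothetical, and deployments that rename the loader path would slip past it.

```python
from urllib.parse import parse_qs, urlsplit


def looks_like_first_party_tag_loader(url: str, site_domain: str) -> bool:
    """Heuristic: is this a first-party URL that resembles a GTM loader?

    Assumes the loader keeps the default "gtm.js" file name or passes a
    container ID such as "GTM-XXXX" in the "id" query parameter.
    """
    parts = urlsplit(url)
    host = parts.hostname or ""
    is_first_party = host == site_domain or host.endswith("." + site_domain)
    if not is_first_party:
        return False  # third-party loaders are caught by ordinary host checks

    has_loader_path = parts.path.lower().endswith("/gtm.js")
    container_ids = parse_qs(parts.query).get("id", [])
    has_container_id = any(v.upper().startswith("GTM-") for v in container_ids)
    return has_loader_path or has_container_id


# Hypothetical URLs for illustration.
print(looks_like_first_party_tag_loader(
    "https://load.sgtm.example.com/gtm.js?id=GTM-XXXX", "example.com"))
print(looks_like_first_party_tag_loader(
    "https://example.com/assets/app.js", "example.com"))
```

A match is a prompt to inspect the request manually, not proof of tracking: the point is only that a first-party hostname should not exempt a request from the pre-consent check.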

Why the results don’t line up (and why that’s the point)

Each scanner uses a different methodology and vocabulary, and they don’t agree on what counts as a “tracker,” what is “necessary,” what is “marketing,” or what should be classified at all. That makes it extremely difficult for a company to compare results or to understand whether the scanner is validating consent-before-tracking - or merely generating a cookie inventory.

The goal of this benchmark was not to crown a “winner.”

It was to identify whether these tools surface the kinds of real-world issues that actually matter in the pre-consent window.

Friction and dark patterns in the scanning experience

A surprising number of these ecosystems make it hard to get a clear answer:

- Several tools require account creation with name, company, and email before showing anything useful.

- Only Cookie-Script and Piwik PRO (and our reference privacy scanner) offered a free scan without registration.

- Some scanners even require consent to marketing or similar conditions to download a report (for example CookieHub), which is problematic in itself - especially for tools positioned as privacy compliance infrastructure.

The hard conclusion

Let’s be honest: for most companies, it’s extremely difficult to get a clear, actionable overview from many of these scanners.

Some don’t measure beyond cookies. Some hide key details behind signup or paid plans. Some classify questionable findings as “OK” without explaining what actually happened pre-consent. And when server-side tag management enters the picture, several scanners simply lose the trail entirely.

Which brings us back to the core point of this Weekly:

If a scanner isn’t explicitly measuring the pre-consent window - what requests and identifiers happen before the visitor chooses - then “we’re compliant” is not a conclusion. It’s a guess.

The moment teams need to recognize

Most companies aren’t trying to cheat. They’re trying to be safe.

They buy a CMP. They run a scanner. The report looks calm. They take a screenshot. They move on - because they have revenue targets, campaigns, and a backlog.

And that is exactly how pre-consent tracking becomes normal: not through intent, but through misplaced trust in the wrong measurement.

So here’s the value-based takeaway that actually matters:

If you operate in Denmark/EU, don’t outsource truth to a PDF report.

The standard isn’t “we documented cookies.”

The standard is “we didn’t start tracking before the visitor chose.”

If you want, run your domain through the free Privacy Scanner and message me the pre-consent result. I’ll tell you plainly whether you’re looking at a small configuration gap - or a structural gate failure that needs engineering ownership.

Ronni K. Gothard Christiansen

Technical Privacy Engineer & CEO @ AesirX.io